This article discusses the results of a survey on why Microsoft 365 developers do not implement automated testing in their projects. I emphasize the importance of testing only your own code, share my approach to testing, and ask for feedback on what guidance would help most when adding tests to Microsoft Teams, SharePoint Framework, and Microsoft 365 apps.

Survey Results

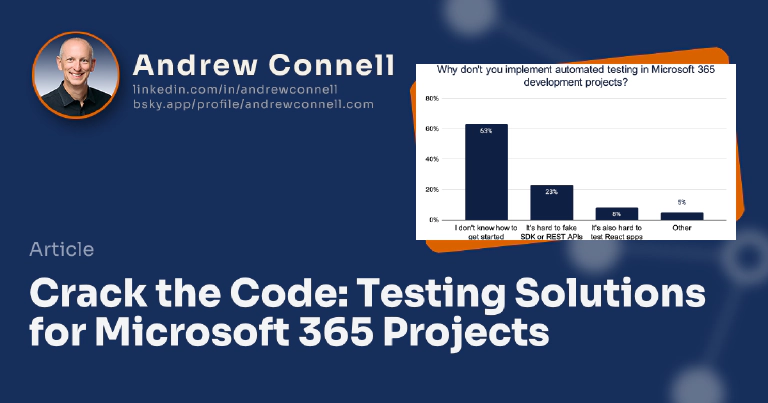

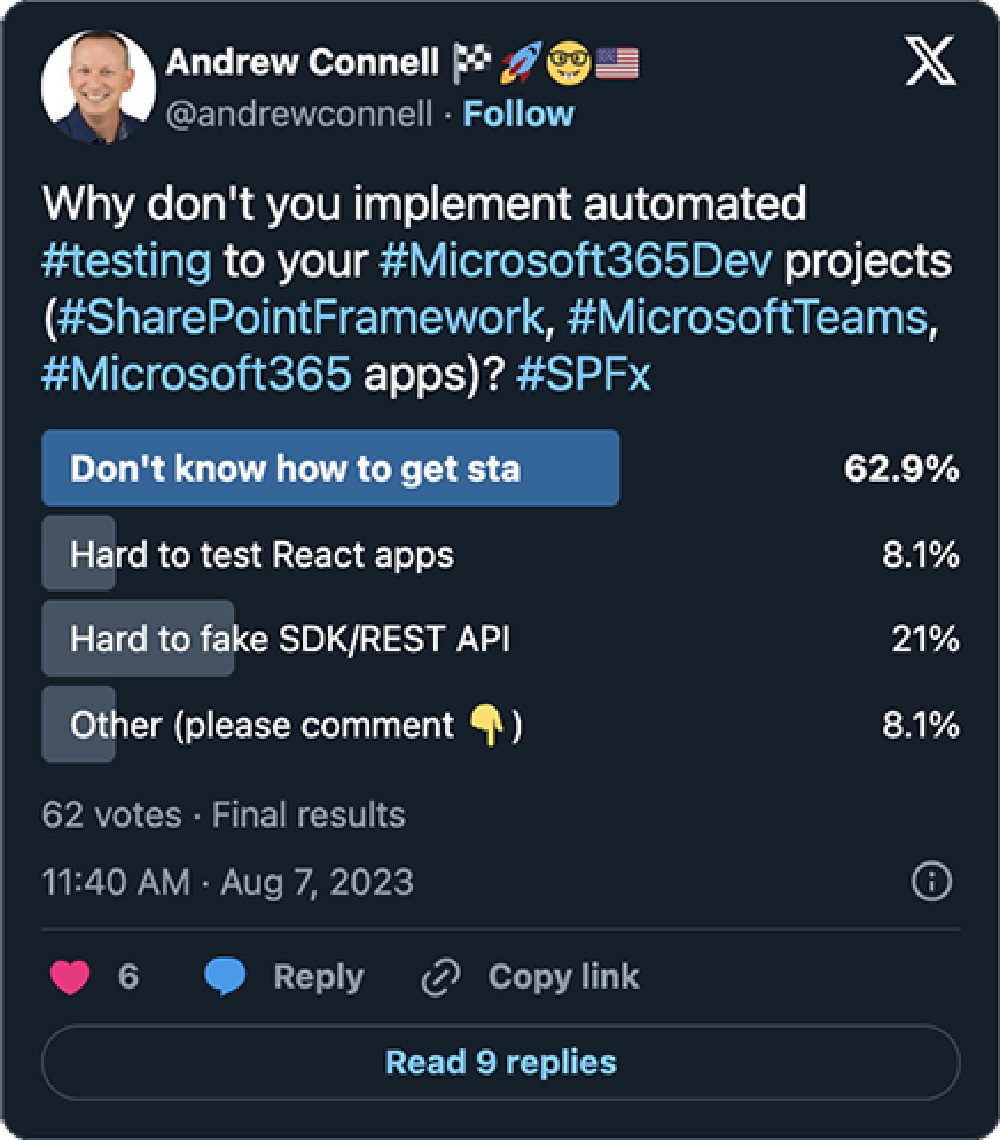

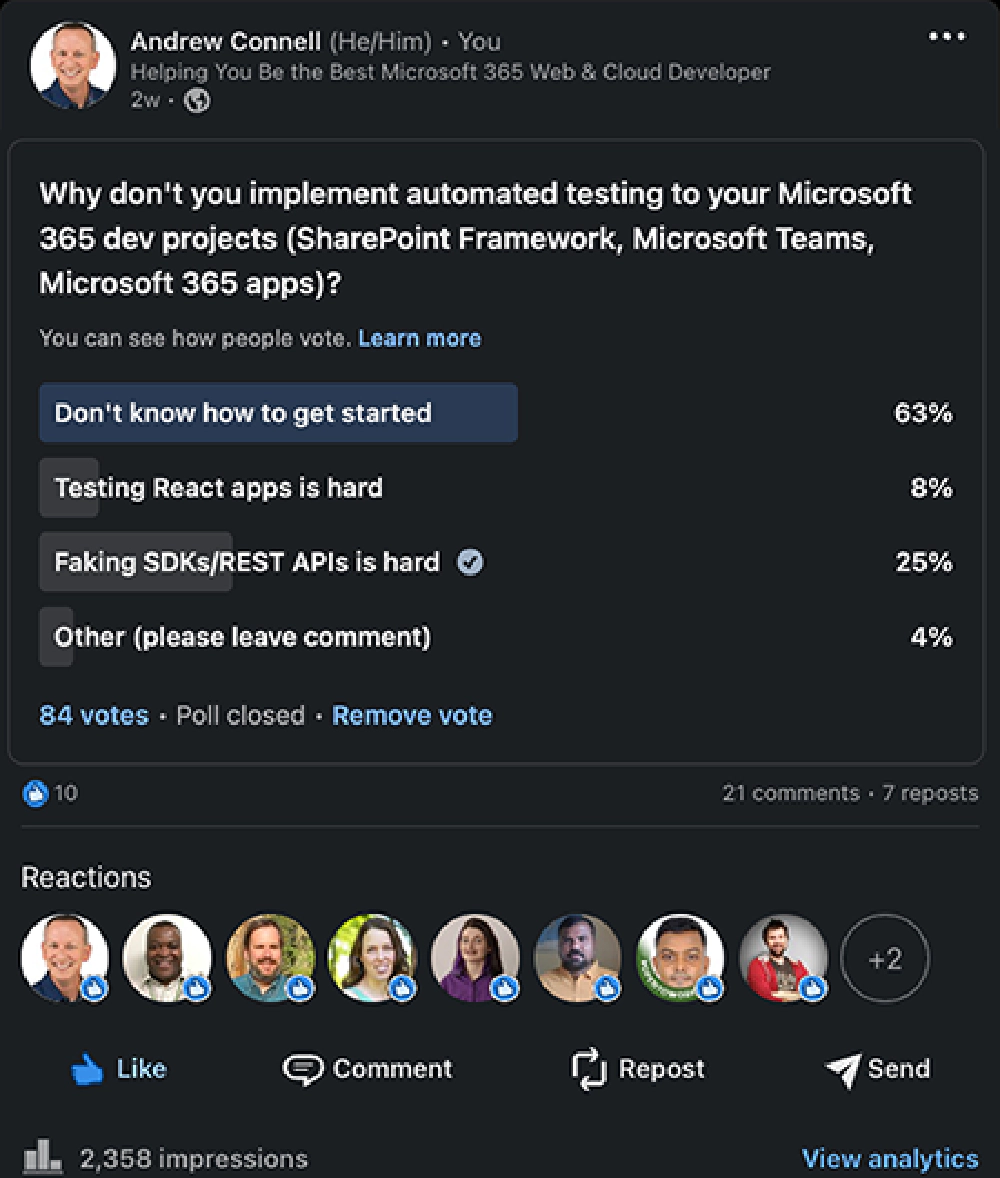

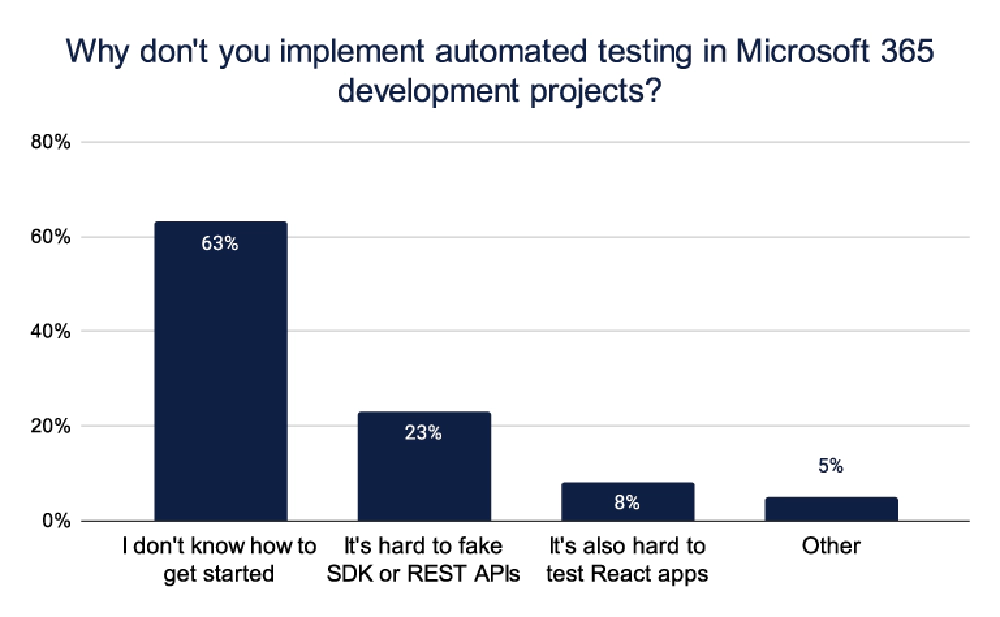

A few weeks ago, I posted two surveys (on LinkedIn and on Twitter/X), asking Microsoft 365 developers why they don’t implement automated testing in their Microsoft 365 development projects.

The survey asked developers about their experience with, and thoughts on, incorporating testing in their Microsoft 365 projects, including the SharePoint Framework (SPFx), Microsoft Teams, and building Microsoft 365 apps. The survey had three options to choose from, as well as an “other” category where they could provide additional comments.

The three options were:

- “I don’t know how to get started”

- “It’s hard to fake SDK or REST APIs”

- “It’s also hard to test React apps”

The responses were fairly predictable, but they also provided some interesting insights.

'Why don’t you implement automated testing in Microsoft 365 development projects' survey responses

I received numerous great comments from the survey. Here are some examples:

Reshmee and many others mentioned that writing tests for Microsoft 365 apps takes twice as long, resulting in low productivity.

'Writing tests takes too long'

Sandro pointed out that testing the relationships between front-end and back-end is difficult. It’s also challenging to determine if the data stored and returned from different services is what’s expected.

'Integration testing is difficult'

Razvan wonders why some SharePoint developers think software engineering principles don’t apply to SPFx development. This aligns with previous posts in my newsletter, where I recommended building web apps instead of just Microsoft 365 apps.

'What's so special about SPFx development WRT testing?'

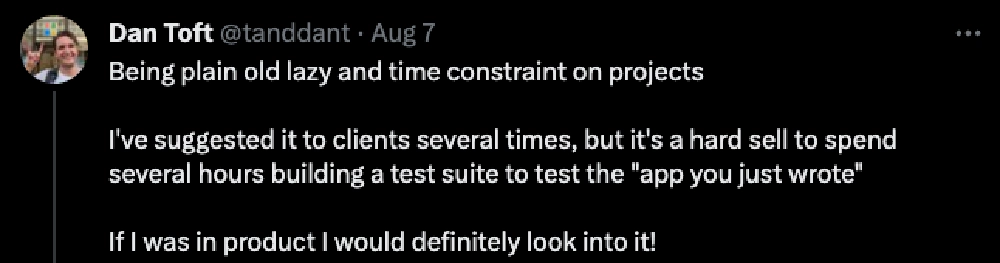

Dan thinks that the business criticality of many SPFx apps is so low that testing might not be necessary.

'Is testing only for business critical apps?'

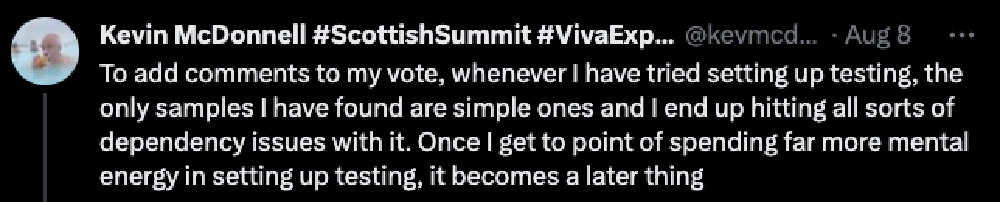

Kevin noticed that many sample automated testing methods are too simple, and when implementing them in your project, you encounter roadblocks with project and real-world dependencies.

'Samples aren't realistic and too simplistic'

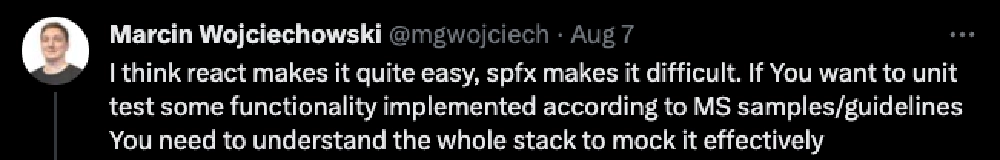

Both Kevin and Marcin experienced dependency hell when it comes to testing.

'Testing Microsoft 365 apps is a step into Dante's dependency hell'

It's Not Too Late to Vote!

If you missed out on that survey, I’ve created an anonymous form so you can add your votes to the tally through September… I’ll update this article when the votes come in. Take the survey & add your thoughts!

Care to add your thoughts? Pile onto the existing discussions on Twitter/X, LinkedIn, and Threads!

Different Types of Testing

Let’s take a moment to identify some of the different types of testing available to us in our projects.

Unit Testing

Unit testing is the most basic kind of testing, where you test your own code in isolation. It is atomic and tests only one component. For example, if you pass two numbers to a method called add(), it should return their sum. A unit test would validate that you get the expected result (5) when passing in specific values (2 & 3).
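To make that concrete, here’s a minimal sketch of the add() example. The `expectEqual` helper is a hypothetical stand-in I’m using so the snippet runs on its own; in a real project, a test runner’s assertion would take its place.

```typescript
// The code under test: the add() method from the example above.
function add(a: number, b: number): number {
  return a + b;
}

// Hypothetical stand-in for a test runner's assertion, so this sketch is
// self-contained. It throws when the actual value is not the expected one.
function expectEqual<T>(actual: T, expected: T, label: string): void {
  if (actual !== expected) {
    throw new Error(`${label}: expected ${String(expected)}, got ${String(actual)}`);
  }
}

// The unit test: one component, specific inputs, one expected result.
function testAddReturnsSum(): void {
  expectEqual(add(2, 3), 5, "add(2, 3)");
}

testAddReturnsSum();
```

The test knows nothing about where add() is used. It checks one behavior with fixed inputs, which is what keeps it fast and easy to reason about.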

Integration Testing

Integration testing ensures that components work well together, not just individually. This is when you test an entire scenario or many different components at once. These tests can take a lot of time to write and can get complicated.

User Experience & End-to-End (E2E)

End-to-End testing evaluates the product as a whole from the user’s perspective, which is why it’s also called user experience testing.

E2E testing can be challenging because the tests can be very brittle, especially in a hosted solution where your code runs inside SharePoint or Microsoft Teams. On the other hand, these tests can expose issues that were introduced without you making any changes, allowing you to address them before your users report them.

Key Points to Testing Projects

When it comes to testing, there are some key points to keep in mind. Remember: testing doesn’t have to be an all-or-nothing proposition.

You should only test your code, not other libraries or external dependencies.

Unexpected scenarios should also be handled by your code.
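Here’s a rough sketch of both points together. It assumes a hypothetical `parseItemTitles()` function of my own that reads a response payload shaped like `{ value: [{ Title: "..." }] }`. That shape, the function name, and the `check` and `sameList` helpers are assumptions for illustration. The tests never call a real service; they only check how my code reacts to expected and unexpected input.

```typescript
// My code: turns a response payload into a list of titles.
// Assumed (hypothetical) payload shape: { value: [{ Title: "..." }, ...] }.
function parseItemTitles(payload: unknown): string[] {
  // Unexpected scenario: the payload is not an object at all.
  if (typeof payload !== "object" || payload === null) return [];

  // Unexpected scenario: the "value" property is missing or not an array.
  const value = (payload as { value?: unknown }).value;
  if (!Array.isArray(value)) return [];

  const titles: string[] = [];
  for (const item of value) {
    // Unexpected scenario: an item is null or its Title is not a string.
    const title = (item as { Title?: unknown } | null)?.Title;
    if (typeof title === "string") titles.push(title);
  }
  return titles;
}

// Hypothetical helpers standing in for a test runner's assertions.
function sameList(a: string[], b: string[]): boolean {
  return a.length === b.length && a.every((v, i) => v === b[i]);
}
function check(condition: boolean, label: string): void {
  if (!condition) throw new Error(`Failed: ${label}`);
}

// Expected input.
check(
  sameList(parseItemTitles({ value: [{ Title: "News" }, { Title: "Events" }] }), ["News", "Events"]),
  "well-formed payload"
);
// Unexpected input: my code should cope instead of throwing.
check(sameList(parseItemTitles(null), []), "null payload");
check(sameList(parseItemTitles({ value: "oops" }), []), "value is not an array");
check(
  sameList(parseItemTitles({ value: [{ Title: 42 }, null, { Title: "Docs" }] }), ["Docs"]),
  "malformed items are skipped"
);
```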

Should You Add Unit Tests to All of Your Apps?

One of the big questions when it comes to testing is whether to add unit tests to all of your apps. It can add extra development time to your projects, but it’s a good practice to strive for.

How I Approach Testing

I strive to add unit testing to all of my apps and keep it simple by only testing my code. I don’t test any calling of dependencies or call remote services. Instead, I fake or mock up the response that I get from these different dependencies that I’m going to use in my Microsoft 365 apps.
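As a sketch of what faking a dependency can look like, assume a hypothetical `AnnouncementService` interface that a real implementation would back with SharePoint or Microsoft Graph calls. Every name below is made up for illustration. The test swaps in a fake that returns canned data, so only my own filtering logic gets exercised.

```typescript
// Hypothetical shape of the data my code consumes.
interface Announcement {
  title: string;
  urgent: boolean;
}

// The dependency boundary (hypothetical). A real implementation would call
// a remote service; the test never touches that implementation.
interface AnnouncementService {
  getAnnouncements(): Promise<Announcement[]>;
}

// My code: the only thing the test exercises.
async function getUrgentTitles(service: AnnouncementService): Promise<string[]> {
  const items = await service.getAnnouncements();
  return items.filter((item) => item.urgent).map((item) => item.title);
}

// The fake: returns canned data and makes no network call.
class FakeAnnouncementService implements AnnouncementService {
  private readonly items: Announcement[];

  constructor(items: Announcement[]) {
    this.items = items;
  }

  getAnnouncements(): Promise<Announcement[]> {
    return Promise.resolve(this.items);
  }
}

// The test: feed known data in through the fake, check what my code returns.
async function testReturnsOnlyUrgentTitles(): Promise<void> {
  const fake = new FakeAnnouncementService([
    { title: "Office closed", urgent: true },
    { title: "New cafeteria menu", urgent: false },
  ]);

  const titles = await getUrgentTitles(fake);

  if (titles.length !== 1 || titles[0] !== "Office closed") {
    throw new Error(`Unexpected titles: ${JSON.stringify(titles)}`);
  }
}

void testReturnsOnlyUrgentTitles();
```

Passing the service in as a parameter is the design choice that makes this work: the production code and the test differ only in which implementation they hand to `getUrgentTitles()`.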

I love the idea of things like end-to-end testing and integration testing, but I generally don’t do them unless it’s a very complicated component. I like to use tools to simplify my testing experience, like the VS Code extension Wallaby.js, which runs only the tests affected by the code I changed.

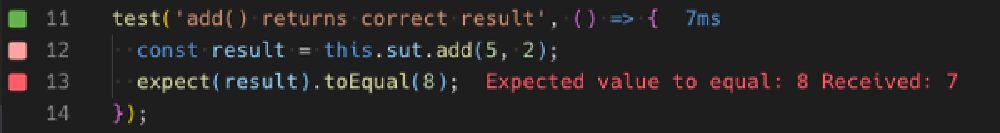

Wallaby.js gutter indicators of test results in real-time

What’s cool about it is that it doesn’t interrupt my flow. I don’t have a separate console running or things running in a hidden window. Instead, I like to see little visual indicators in the gutter of my code, either in the test itself or in the actual code of my project, showing whether my tests have passed (green) or failed (red). This way, I can easily keep track of the system under test without any disruption.

It shows me when tests pass and fail immediately after I update my code or my test, so I can address things that are breaking or that I’m fixing as I’m working on them.

Learn More About Wallaby.js

Want to see Wallaby.js in action? Check out my article & associated video on how I use it in SPFx projects:

📜 Article: Testing SPFx Projects with Minimal Distractions: Wallaby.js

📺 YouTube: Testing SPFx projects with Minimal Distractions: Wallaby.js

My Experience Adding Testing to Microsoft 365 Projects

In my experience of following this model, it doesn’t double the development time for my projects, as many comments suggested.

In fact, I’d argue that it can do the exact opposite: increase your productivity.

It speeds up development because I don’t need to run my project as often to see how code changes impact the app.

In other words, if I’m building an SPFx app, I’m not running gulp serve or the popular spfx-fast-serve on the project very often. In fact, I usually only do it once every few hours.

How I Add Tests to My Projects

I focus on getting the plumbing for the user experience running first, not on creating the user experience itself.

The tests are for the plumbing. Then, when I connect the user experience to the plumbing, such as fetching data collections or wiring up events that are connected to methods, I’ll start adding in the user experience code. But that doesn’t necessarily mean I’ll add testing for my user experience.

Maybe I will, maybe I won’t. For any non-trivial app that’s a little more complicated, maybe I’ll add some tests for my React app to do things like simulate button clicks or simulate window.onload events to test the generated HTML of my React components.

Add Failing Tests for User Feedback Request

Now, when I share my app with others and they find something that breaks, or when I find something that breaks, the first thing I do is add a test to mirror the feedback I got, whether that’s the failure of something not working the way it should or finding a bug.

The test should fail at first, because I haven’t yet written the code that addresses what they found. Once I have a failing test that captures the spec they’ve described, I go back and update my project until the test passes. In other words, I fix the problem.

Their feedback is treated as a feature or design request that I translate into a test that serves as a spec for my project.
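Here’s a small sketch of that workflow, using a made-up bug report: “a title that is exactly at the length limit still gets cut off.” The `truncateTitle()` helper and the report itself are hypothetical. The first test mirrors the feedback and would have failed against the original code; the implementation shown is the version after the fix.

```typescript
// Hypothetical helper under test. The reported bug: the first version used
// `>=` in this comparison, so a title exactly `max` characters long was
// still cut and given a trailing "...". Using `>` is the fix.
function truncateTitle(title: string, max: number): string {
  return title.length > max ? title.slice(0, max) + "..." : title;
}

// Step 1: a test that mirrors the feedback. It failed against the `>=` version.
function testTitleAtLimitIsUnchanged(): void {
  const actual = truncateTitle("Hello", 5);
  if (actual !== "Hello") {
    throw new Error(`expected "Hello", got "${actual}"`);
  }
}

// Step 2: keep the existing behavior covered so the fix doesn't break it.
function testLongTitleIsTruncated(): void {
  const actual = truncateTitle("Hello world", 5);
  if (actual !== "Hello...") {
    throw new Error(`expected "Hello...", got "${actual}"`);
  }
}

testTitleAtLimitIsUnchanged();
testLongTitleIsTruncated();
```

The test from step 1 stays in the project after the fix, so the same bug can’t quietly come back later.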

How Can I Help?

That’s just my approach, but I ran this survey because I really wanted to figure out how I can help address this problem that I think people have.

I want to address the three options that I listed in my survey, and do it in a way that helps you.

But before I get started on this work, I want to make sure the most important questions are being answered…

First of all, how do you get started?

I want to show you how you can add testing to your Microsoft Teams, your SPFx, and your Microsoft 365 apps. Then, I want to show you how to fake data service dependencies.

Are you using data from the SharePoint REST API and SharePoint Lists? Are you using data from Microsoft Graph?

Anything to add?

How do you fake/mock calling third-party endpoints?

I want to show you how you can mock that stuff up very easily. Additionally, I want to show you how you can test your React apps using different libraries and tools that are out there.

By doing so, my goal is to convince you that writing tests won’t double your development time. In fact, it will make you more productive not only when writing your app but also when addressing issues down the road.


So, what do you think?

Does this sound like something you’d be interested in? If so, let me know.

Did I miss something?

Is there something else about testing Microsoft 365 apps you want to see?

Microsoft MVP, Full-Stack Developer & Chief Course Artisan - Voitanos LLC.

Andrew Connell is a full stack developer who focuses on Microsoft Azure & Microsoft 365. He’s a 20+ year recipient of Microsoft’s MVP award and has helped thousands of developers through the various courses he’s authored & taught. Whether it’s an introduction to the entire ecosystem, or a deep dive into a specific software, his resources, tools, and support help web developers become experts in the Microsoft 365 ecosystem, so they can become irreplaceable in their organization.