I admit I’ve left the impression I don’t think highly of vibe coding. That’s not quite right.

In my article My Thoughts on Vibe Coding vs. Agentic Engineering, I explained my philosophy: vibe coding is fine for throwaway personal tools, experimentation, and tools with a minimal blast radius. For anything that ships to real users, you keep engineering judgment at the center and let agents do the tedious work. But that raises the question: how do you actually decide in the moment what to hand to an agent and what to do yourself? That calibration is what this article is about.

At Voitanos, I run live Microsoft 365 development workshops: multi-day, instructor-led sessions where students build real SharePoint Framework (SPFx) solutions and Microsoft Teams apps in real time. I host them on a platform called Circle. It’s good software. I’m happy with it. But it has one workflow that used to eat me alive.

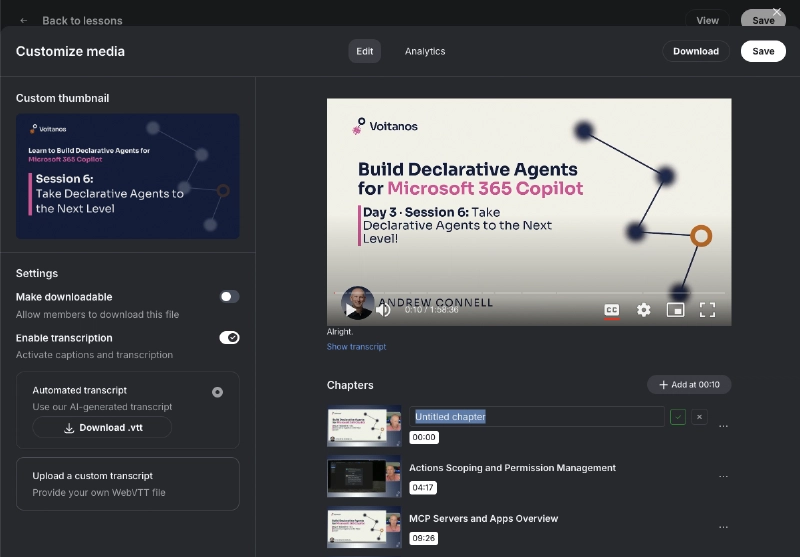

Every time I publish the recording of a live session, I have to add chapter markers to the video, like the little jump points you find in YouTube videos. The problem is that Circle’s editor for adding those chapters is painful:

- You add them one at a time

- There’s no bulk paste

- There’s no import

Circle.so video chapter editor showing the manual one-at-a-time timestamp entry UI with no bulk import option

For a 90-minute lesson, finding the chapter timestamps and manually entering them took a LOT of time. With two live lessons recorded each day of the workshop, I usually fell behind by a day or more getting those chapter links in there.

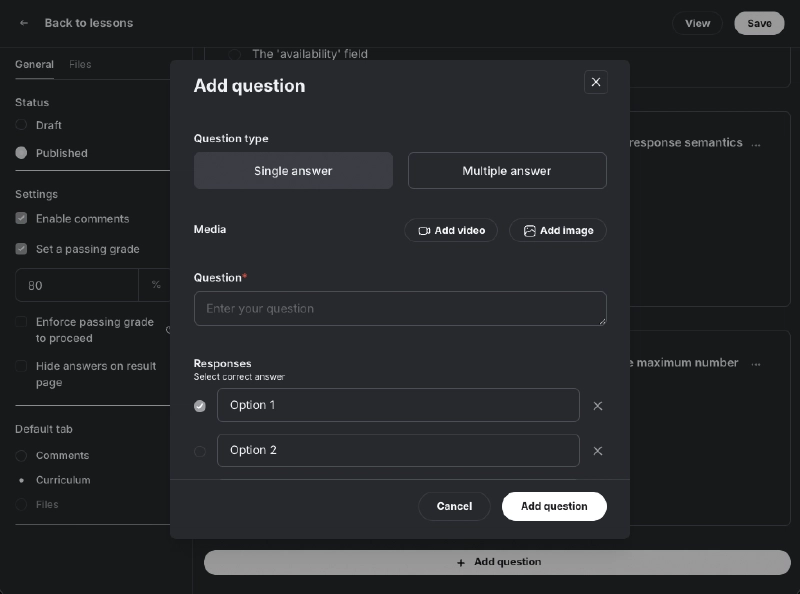

On top of that, every lesson module has a knowledge check: a short quiz generated from the material. That meant writing roughly 10 multiple-choice questions covering the live lesson and all instructor-led demos in the module. Loading them was just as tedious as the chapters: jumping between the mouse and keyboard, clicking “add question” and “add answer”, and pasting.

Circle.so quiz editor interface showing the step-by-step question and answer entry workflow

In addition to the chapter timestamps, I also needed a summary of the lesson and notes. I was already using AI to generate those for me, but I decided to level this process up.

If I recorded lessons on a Tuesday, I wouldn’t have them fully published until Wednesday or Thursday. I always felt like I was wasting my time on something that shouldn’t take that long.

Replicate your hands; don’t replace your brain

The rule: if the decisions are already made and what’s left is translating, saving, and clicking — that’s hands work. Automate it. If your judgment is still active in producing the output — if the thinking isn’t done — that’s brain work. Don’t automate it.

Everything else in this article is an application of that single distinction:

| Automate it (hands work) | Keep it manual (brain work) |
|---|---|
| Repetitive interactions with a bad UI | Architecture & design decisions |
| Converting output between formats | Core creative work that ships to users |
| Glue code between two systems you don’t care about | Domains you’re trying to learn |
| Pasting the same data into the same forms | Work where “good enough” isn’t good enough |
| Mechanical translation of decisions you’ve already made | Anything where your judgment is still shaping the outcome |

Let me unpack both sides with the Circle example.

How I automated my post-lesson workflow with AI agents

The complete post-lesson pipeline, from transcript processing through chapter generation, quiz creation, and Circle publishing, now runs in 5-10 minutes of active work. The rest is background processing I walk away from. Here’s what the workflow looks like and how I built each piece.

One day I finally took the time to explore how AI could help me speed this process up.

When I complete a 90-minute live lesson, I pull the recording into Adobe Premiere Pro, where I use its AI features to transcribe the recording and enhance the audio as I would for a podcast, since it’s just dialog.

I get this process rolling within 5 minutes of finishing a lesson. Premiere takes a while, but it runs in the background while I grab something to eat during the 30-minute break between live lessons. It’s finished by the time I finish my second lesson.

After I kick off the second lesson’s transcription and audio processing, I start rendering the first lesson and export its transcript.

Here’s where AI comes in handy.

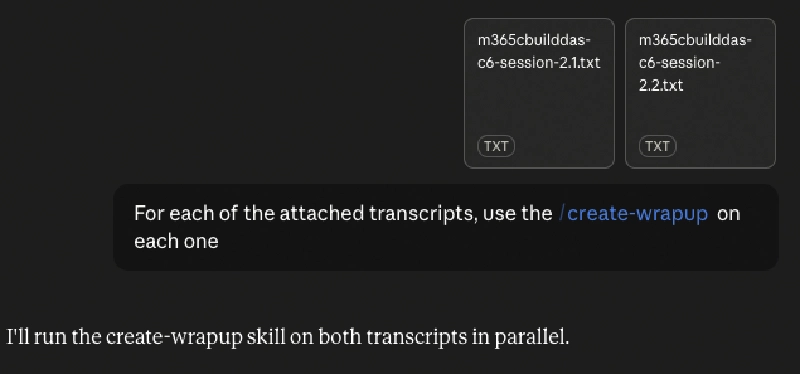

I created an agent that runs in Claude, just a markdown file, that does the following with the transcript:

Claude Cowork agent running the post-lesson transcript processing pipeline for a Microsoft 365 workshop session

- creates a short paragraph description of the lesson

- extracts the key topics covered in the lesson

- creates the chapter timestamps and titles

- creates a bullet point summary of the lesson

- extracts the following with timestamps so I can quickly review them:

  - student questions and my responses

  - links and resources I referenced

  - coded “notes to self” from when I teach (e.g., when I see a typo in a slide, I say “FIX THAT [typo|bug|bullet point|etc]”)
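In this workflow the agent does the extraction, but the spoken marker is regular enough that plain pattern matching can serve as a quick cross-check. The following is a minimal sketch, not the agent's method. It assumes each transcript line starts with a timestamp followed by the spoken text, which may not match what Premiere Pro actually exports, and the `Note` and `find_notes_to_self` names are hypothetical.

```python
import re
from typing import NamedTuple


class Note(NamedTuple):
    timestamp: str
    text: str


# Assumed transcript line shape: "00:41:07 spoken text". The real export
# format may differ, so adjust this pattern to match your transcript.
LINE = re.compile(r"^(?P<ts>\d{1,2}:\d{2}(?::\d{2})?)\s+(?P<text>.+)$")

# The spoken marker from the list above, matched regardless of case.
MARKER = re.compile(r"\bfix that\b", re.IGNORECASE)


def find_notes_to_self(transcript: str) -> list[Note]:
    """Return every timestamped line that contains the spoken marker."""
    notes = []
    for raw in transcript.splitlines():
        match = LINE.match(raw.strip())
        if match and MARKER.search(match["text"]):
            notes.append(Note(match["ts"], match["text"]))
    return notes


sample = """\
00:12:03 So that's how the token gets validated.
00:12:40 Fix that typo on the slide, it should say tenant.
00:31:15 Great question, let me show you in the demo.
01:02:09 FIX THAT bullet point, it's out of date.
"""

for note in find_notes_to_self(sample):
    print(note.timestamp, note.text)
```

A literal match like this only catches the exact phrase, so it complements the agent rather than replacing it.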

At first I ran this with the Claude Code terminal client, but today I run it inside Claude Cowork (Anthropic’s project-based workspace in the Claude desktop app) so the agent retains context across the whole post-lesson pipeline.

After the markdown file is created, I review the chapters it suggested, make edits to the titles, and collect all the URLs to the resources I referenced.

## Summary

This session covers data storage options available for Teams apps, comparing Azure hosted storage, SharePoint document libraries and lists, OneDrive app folders, and SharePoint Embedded. You'll learn the decision matrix for choosing the right storage option...

## Key Points

- Azure hosted storage offers maximum flexibility but requires managing infrastructure, scaling, authentication, and compliance separately

- SharePoint document libraries and lists provide built-in Microsoft 365 compliance but are limited to team-scoped data and don't scale well beyond 5000 items

- OneDrive app folders work best for per-user settings and preferences with automatic cross-device sync but can't access shared team data

- SharePoint Embedded stores isolated document libraries within customer tenants for ISV scenarios while preserving copilot indexing without counting toward storage quota

...

## Video Chapters

05:27 Platform Options

08:42 Azure Storage Details

14:37 Database Patterns

29:40 SharePoint Approach

37:41 OneDrive Storage

44:49 SharePoint Embedded

55:03 Embedding Billing

01:04:16 Decision Matrix

01:14:07 Samples & Close

## Notes

### Storage Challenge & Requirements

- Who owns the data: user, team, organization, or app itself

- Where is data accessed: single user, team members, organization-wide, guests

- How is data queried: key-value, SQL statements, Graph queries, full-text search

- Compliance needs: retention policies, eDiscovery, data loss prevention, auditing

- Each question should guide toward appropriate storage platform

### Azure Hosted Storage

- Wildcard option with maximum flexibility and control

- Supports Azure SQL, Cosmos DB, PostgreSQL, Blob storage, Table storage, message queues, Data Lake

- Client side apps should not write directly to Azure resources (security risk)

- Pattern: Tab or app makes authenticated call to server middleware

- Server middleware (Azure Function or Teams SDK function) reads/writes to data store

- Can use single sign-on token validation to ensure only your app can call endpoints

Copying and pasting the lesson summary, key topics, and AI notes was simple enough. What I needed was a faster way to load the chapter links into the uploaded lesson video. One day it hit me: I bet the Circle chapter editor was just a UI that saved chapters through some API.
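The chapter list in the agent's markdown output is regular enough to turn into structured data before handing it to any importer. Here is a minimal sketch of that parsing step, assuming the chapters live under a `## Video Chapters` heading in `MM:SS Title` or `HH:MM:SS Title` form as in the sample above. The `Chapter` type and `parse_chapters` function are hypothetical names, not code from the extension.

```python
import re
from typing import NamedTuple


class Chapter(NamedTuple):
    seconds: int
    title: str


# Matches "MM:SS Title" and "HH:MM:SS Title", the two shapes in the sample.
CHAPTER_LINE = re.compile(r"^(?:(\d{1,2}):)?(\d{1,2}):(\d{2})\s+(.+)$")


def parse_chapters(markdown: str) -> list[Chapter]:
    """Extract chapters from the '## Video Chapters' section of the notes."""
    chapters = []
    in_section = False
    for raw in markdown.splitlines():
        line = raw.strip()
        if line.startswith("## "):
            # Any second-level heading starts or ends the chapter section.
            in_section = line == "## Video Chapters"
            continue
        if not in_section or not line:
            continue
        match = CHAPTER_LINE.match(line)
        if match is None:
            raise ValueError(f"Unrecognized chapter line: {line!r}")
        hours, minutes, secs, title = match.groups()
        total = int(hours or 0) * 3600 + int(minutes) * 60 + int(secs)
        # Chapters must move forward in time; catch edits that broke the order.
        if chapters and total <= chapters[-1].seconds:
            raise ValueError(f"Chapter out of order: {line!r}")
        chapters.append(Chapter(total, title.strip()))
    return chapters


notes = """\
## Video Chapters

05:27 Platform Options
08:42 Azure Storage Details
01:04:16 Decision Matrix

## Notes
- Who owns the data
"""

for chapter in parse_chapters(notes):
    print(chapter.seconds, chapter.title)
```

Failing loudly on a malformed or out-of-order line keeps the human review step honest: a bad edit to the chapter list surfaces before anything is saved.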

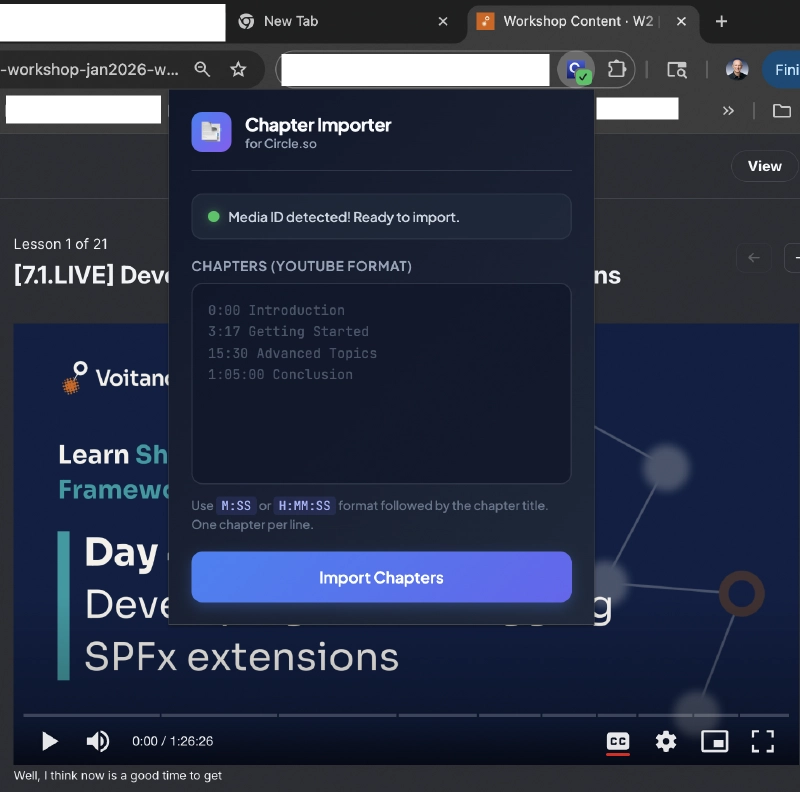

Vibe coding a Chrome extension to skip the bad UI

A while back I ripped the whole thing apart with a Chrome extension I vibe coded in an hour or two. After an interactive back and forth with Claude Code and the Claude Chrome extension (so Claude could inspect the traffic from the web app), we found the API call Circle was using!

I then put Claude Code into plan mode and explained exactly what I had and what I needed it to do. After a little more back and forth with AI, it executed the plan and created my first Chrome extension!

Custom Chrome extension UI showing the chapter timestamp import and quiz YAML upload interface for Circle.so

My new agentic workflow after teaching a lesson takes about 5-10 minutes of manual review and active work for what can’t be automated:

[Workflow diagram, partially recovered: two long-running background steps run in parallel. Rendering ends at “Rendered MP4 ready”, and the agent converting the transcription to markdown ends at “Markdown file ready”. Both lead to “Create lesson in Circle”, which leads to “Upload MP4 to lesson” and “Paste markdown into lesson body”. “Upload MP4 to lesson” leads to “Paste chapters into Chrome extension”, and both branches end at “Save lesson”.]

Rendering and transcription run as parallel background processes: one in Premiere Pro, one in a Claude agent. Their outputs converge at Circle lesson creation, which fans out to three paste/upload steps before saving. The full pipeline replaces what used to take an hour or two of manual work with 5-10 minutes of active review.

Many developers I talk to are getting this calibration wrong.

I then implemented a similar process for the module’s quizzes: an agent processes the transcripts for the live lesson and instructor-led demos, then creates a batch of 10-20 questions, each with the approximate timestamp(s) in the lesson or demo where I answer the question. After I review and edit them, the vibe-coded Chrome extension lets me pick the YAML file that contains the quiz and uses the same pattern as the chapter import to load them.
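Because the quiz file is edited by hand after the agent drafts it, a structural check before import can catch slips such as a missing answer or a wrong answer index. The sketch below is one way to do that, under stated assumptions: the article doesn't show the YAML schema, so the `prompt`, `answers`, `correct`, and `timestamp` fields are hypothetical, and the questions are assumed to be already loaded into plain dictionaries by a YAML library.

```python
from dataclasses import dataclass


@dataclass
class Question:
    prompt: str
    answers: list[str]
    correct: int         # index of the right answer within `answers`
    timestamp: str = ""  # approximate spot in the lesson where it's answered


def validate_quiz(raw_questions: list[dict]) -> list[Question]:
    """Check every question and report all problems at once."""
    problems: list[str] = []
    questions: list[Question] = []
    for number, raw in enumerate(raw_questions, start=1):
        prompt = str(raw.get("prompt", "")).strip()
        answers = [str(answer).strip() for answer in raw.get("answers", [])]
        correct = raw.get("correct")
        found_before = len(problems)
        if not prompt:
            problems.append(f"Question {number}: missing prompt")
        if len(answers) < 2:
            problems.append(f"Question {number}: needs at least two answers")
        if len(set(answers)) != len(answers):
            problems.append(f"Question {number}: duplicate answers")
        if not isinstance(correct, int) or not 0 <= correct < len(answers):
            problems.append(f"Question {number}: 'correct' must index an answer")
        # Only keep questions that added no new problems.
        if len(problems) == found_before:
            questions.append(
                Question(prompt, answers, correct, str(raw.get("timestamp", "")))
            )
    if problems:
        raise ValueError("; ".join(problems))
    return questions


quiz = [
    {
        "prompt": "Which option suits per-user settings and preferences?",
        "answers": ["OneDrive app folder", "Azure SQL", "SharePoint list"],
        "correct": 0,
        "timestamp": "37:41",
    }
]

for question in validate_quiz(quiz):
    print(question.prompt, "->", question.answers[question.correct])
```

Collecting every problem before raising means one pass over the file shows everything that needs fixing, instead of one error per attempt.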

Deciding what the lesson should teach in the first place? What the demos cover, the actual teaching and delivery of the content? No AI there… that’s all me… all brain. No part of that gets automated… never. That’s the work my customers paid me for.

The tasks most worth automating

The best automation candidates share a single trait: the decision is already made and what remains is purely mechanical execution. If you can describe exactly what the output looks like before you start, you’re doing hands work. Automate it.

Once you start looking at your week through this lens, the pattern becomes obvious. The best automation targets are the tasks where you feel dumb doing them. Repetitive interactions with a bad UI. Converting output from one format to another. Pasting the same kind of data into the same kinds of forms. Writing glue code between two systems you don’t care about but have to use every week.

Circle’s chapter editor is a bad UX for someone who has to use it a lot. The YAML format conversion was glue work. The repetitive paste-save-paste-save rhythm was mechanical. None of it was shaping anything I couldn’t shape in my head. All of it was just my fingers translating decisions my brain had already made.

Those are the tasks that deserve an afternoon of your time and, in my case, a small Chrome extension.

Should you maintain vibe-coded tools? A practical filter

Here’s a related filter I use. When I built the Chrome extension for Circle, I didn’t care how it was structured. I didn’t read most of the code. I still don’t know, nor care, exactly how it works. If it breaks, it only affects me, so the blast radius is minimal. In that case, I’ll have the AI fix it, or I’ll rebuild it.

Why? Because I have no interest in becoming a Chrome extension developer. That’s not a skill I’m trying to build. That’s not a domain I want to own. The tool exists to save me time, and if it stops saving me time I’ll throw it away.

This is a useful mental move. For any task you’re about to automate, ask yourself: do I want to become good at this?

If yes, don’t automate it blindly. You’ll miss the learning. Build it the slow way, or build it with an agent but review every line and understand every choice.

If no, vibe code it. Ship it. Don’t look back. The whole point is that it saves you time to spend on the domains you do want to master.

When to stay manual: tasks AI shouldn’t replace

The other half of this calibration is knowing what to leave alone.

Anything where the output ships to other people gets the full agentic engineering treatment I wrote about in My Thoughts on Vibe Coding vs. Agentic Engineering.

Anything where you’re trying to learn the domain gets done by hand, or with a coding assistant used as a tutor, such as Claude’s explanatory and learning modes. If you’re trying to become competent at Microsoft Graph permissions, don’t ask an agent to just generate the auth flow and move on. You have to type it, break it, and fix it to understand it. Letting the coding assistant do everything bypasses the learning process.

Anything where “good enough” isn’t good enough stays manual. If the cost of a small error is high, or if the work benefits from fresh creative attention every time, protect it from the automation instinct. Writing a course script, for example. I don’t use AI to draft lessons. That’s the core creative work. I use AI to handle everything around the core creative work so I can spend more time on the lessons themselves.

What changes when you get this calibration right

Applied consistently, the hands/brain distinction has two compounding effects: you recover hours you didn’t know you were wasting, and you develop an instinct that keeps finding more time to recover.

When I started applying this test rigorously, two things happened.

First, I got a lot of time back. The Circle workflow alone saves me roughly ten hours a week when I’m teaching a workshop — what used to spill into Wednesday or Thursday now finishes the same evening. Scale that across a dozen similar automations across my business and it’s transformative.

Second, and this is the less obvious one, I started to notice the tedium more. Before, I’d just grind through whatever the work required. Now I catch myself in the middle of something mechanical and think “wait, this is hands work, why am I doing this by hand?” That noticing is the actual skill. The Chrome extensions and scripts are downstream of it.

If you’re a developer working in the Microsoft 365 (M365) ecosystem — which is where I spend most of my time at Voitanos — the same instinct applies. Every week has some amount of boilerplate:

- Scaffolding SharePoint Framework (SPFx) solutions and bumping their dependencies between versions

- Adding single sign-on (SSO) support to a Microsoft Teams app or a Microsoft 365 Copilot agent

- Copying Microsoft Teams app manifests between projects and rewriting the IDs

- Formatting Microsoft Graph query results into a report your stakeholders will read

Most of it is hands work. None of it is where you add value. All of it is a candidate for a small, focused automation you can vibe code in an afternoon.
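As one concrete instance, here is a minimal sketch of the manifest chore: copy a Teams app manifest and give the copy its own identity. It assumes the manifest's top-level `id` and, for SSO apps, the `webApplicationInfo` `id` and `resource` values are the only fields that need to change. Real projects may have more (bot IDs, for example), and the `retarget_manifest` name and sample values are hypothetical.

```python
import json
import uuid
from typing import Optional


def retarget_manifest(manifest_json: str, client_id: Optional[str] = None) -> str:
    """Return a copy of a Teams app manifest with fresh identifiers."""
    manifest = json.loads(manifest_json)

    # Every Teams app needs its own unique app ID.
    manifest["id"] = str(uuid.uuid4())

    # For SSO apps, point the manifest at the new app registration, if given.
    web_app = manifest.get("webApplicationInfo")
    if client_id and web_app:
        old_id = web_app.get("id", "")
        web_app["id"] = client_id
        if old_id:
            # The resource URI usually embeds the old client ID; swap it too.
            resource = web_app.get("resource", "")
            web_app["resource"] = resource.replace(old_id, client_id)

    return json.dumps(manifest, indent=2)


source = json.dumps(
    {
        "id": "00000000-0000-0000-0000-000000000001",
        "name": {"short": "Demo"},
        "webApplicationInfo": {
            "id": "11111111-1111-1111-1111-111111111111",
            "resource": "api://contoso.example/11111111-1111-1111-1111-111111111111",
        },
    }
)

print(retarget_manifest(source, client_id="22222222-2222-2222-2222-222222222222"))
```

The decisions here (which app registration, which project) are already made before the script runs. The script only does the typing.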

Start paying attention to the moments in your week where you feel dumb doing what you’re doing. Those moments are telling you something. The answer isn’t always to build a tool. Sometimes the task is fine. But often there’s a ten-minute agentic engineering session between you and getting hours of your life back.

Polaris Pathways Podcast: Good (AI Coding) Vibrations

If you liked this, you might be interested in a discussion I had with Chris McNulty on episode 50 of his Polaris Pathways Podcast, Good (AI Coding) Vibrations.

Microsoft MVP, Full-Stack Developer & Chief Course Artisan - Voitanos LLC.

Andrew Connell is a full stack developer who focuses on Microsoft Azure & Microsoft 365. He’s a 22-year recipient of Microsoft’s MVP award and has helped thousands of developers through the various courses he’s authored & taught. Whether it’s an introduction to the entire ecosystem, or a deep dive into a specific software, his resources, tools, and support help web developers become experts in the Microsoft 365 ecosystem, so they can become irreplaceable in their organization.